Neural Networks for Human Action Recognition Based on Multi-channel and Multi-modality Data

Abstract

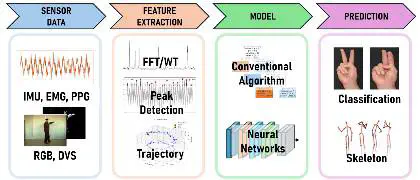

This project focuses on human action recognition (HAR) using data from multiple sensors and channels.

Traditional HAR methods rely primarily on a single modality, such as RGB video or inertial sensors. Combining multiple modalities (RGB, depth, IMU, and event-based sensors) captures richer spatial, temporal, and motion information.

In this project, we design neural network-based models capable of processing multi-channel and multi-modality data, and explore strategies for effective feature fusion and temporal modeling to improve recognition accuracy and robustness.
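As a rough illustration of what feature fusion followed by temporal modeling can look like, the sketch below encodes each modality separately, concatenates the per-frame features, and pools over time before classifying. It is not the project's model. The linear encoders, random weights, feature sizes, class count, and the function names `encode` and `fuse_and_classify` are all assumptions made for the example, and the streams are assumed to be already resampled to the same number of frames.

```python
import numpy as np

rng = np.random.default_rng(0)

def encode(x, w):
    """Per-frame linear encoder with ReLU. x: (T, D_in), w: (D_in, D_feat)."""
    return np.maximum(x @ w, 0.0)

def fuse_and_classify(inputs, enc_weights, clf_weight):
    """Concatenation fusion, temporal average pooling, then a softmax classifier."""
    # Encode each modality on its own, in a fixed (sorted) order.
    feats = [encode(inputs[name], enc_weights[name]) for name in sorted(inputs)]
    fused = np.concatenate(feats, axis=1)   # (T, total feature dim)
    pooled = fused.mean(axis=0)             # simple stand-in for temporal modeling
    logits = pooled @ clf_weight            # (num_classes,)
    exp = np.exp(logits - logits.max())     # numerically stable softmax
    return exp / exp.sum()

# Toy setup: all sizes below are hypothetical.
T, feat_dim, num_classes = 10, 4, 5
input_dims = {"rgb": 32, "depth": 16, "imu": 6, "event": 8}

inputs = {m: rng.normal(size=(T, d)) for m, d in input_dims.items()}
enc_weights = {m: rng.normal(size=(d, feat_dim)) for m, d in input_dims.items()}
clf_weight = rng.normal(size=(feat_dim * len(input_dims), num_classes))

probs = fuse_and_classify(inputs, enc_weights, clf_weight)
print(probs.shape, probs.sum())
```

Concatenation is only one fusion option; weighted or attention-based fusion, and recurrent or convolutional temporal models, would slot into the same structure by replacing the `np.concatenate` and `mean` steps.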

Key Features

- Multi-modality Integration: RGB, depth, IMU, and event-based sensors

- Rich Information Capture: Spatial, temporal, and motion data

- Advanced Fusion: Effective feature fusion strategies

- Robust Recognition: Improved accuracy through multi-channel processing

Research Focus

- Multi-channel neural network architectures

- Feature fusion techniques

- Temporal modeling strategies

- Real-time action recognition systems
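One common way to approach the real-time item above is to classify a sliding window of the most recent frames as they arrive. The sketch below shows that pattern only. The class `SlidingWindowRecognizer`, the window length, and the threshold-based `toy_classify` function are hypothetical stand-ins for a trained model, not part of the project.

```python
from collections import deque

import numpy as np

class SlidingWindowRecognizer:
    """Keeps the latest `window` feature frames and classifies once the buffer is full."""

    def __init__(self, window, classify):
        self.buffer = deque(maxlen=window)   # oldest frame is dropped automatically
        self.classify = classify

    def push(self, frame_features):
        """Add one frame of (fused) features; return a label, or None while warming up."""
        self.buffer.append(np.asarray(frame_features, dtype=float))
        if len(self.buffer) < self.buffer.maxlen:
            return None
        return self.classify(np.stack(self.buffer))   # window has shape (window, D)

def toy_classify(window):
    """Hypothetical classifier: label 1 if the mean signal energy exceeds 0.5, else 0."""
    return int(np.mean(window ** 2) > 0.5)

recognizer = SlidingWindowRecognizer(window=3, classify=toy_classify)

# Three quiet frames followed by three active frames.
stream = [np.zeros(2)] * 3 + [np.ones(2)] * 3
labels = [recognizer.push(frame) for frame in stream]
print(labels)
```

The window length trades latency against context: a shorter window reacts faster but sees less of the action before it has to decide.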