Neuromorphic Control of Robotic Manipulators

Using Spiking Neural Networks with Reinforcement Learning

Abstract

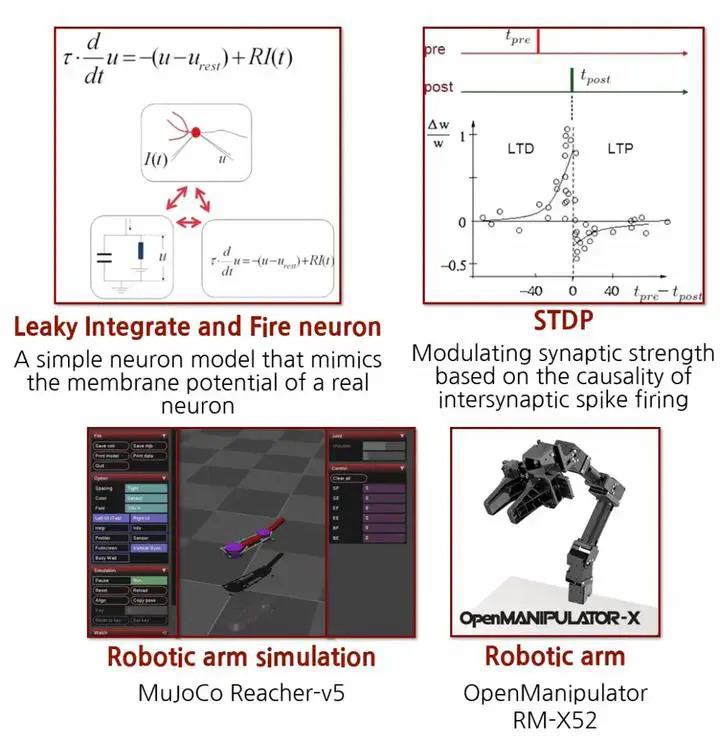

Our research at the Human Brain Neurocomputing Platform Research Center focuses on developing energy-efficient, biologically inspired robotic control systems using spiking neural networks (SNNs).

Unlike traditional artificial neural networks (ANNs), which often suffer from high energy consumption and limited real-time performance, SNNs mimic the spike-based information processing of biological neurons. This gives them better energy efficiency and temporal dynamics well suited to real-time applications.
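
To make the idea of spike-based processing concrete, the following is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a common textbook spiking model. It is an illustration only, not the neuron model used in this project: the function name `simulate_lif` and all parameter values (time constant, threshold, reset) are assumptions chosen for readability.

```python
def simulate_lif(input_current, dt=1.0, tau=20.0,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron.

    Returns the list of time-step indices at which the neuron spiked.
    All parameter values are illustrative assumptions.
    """
    v = v_rest
    spikes = []
    for step, i_in in enumerate(input_current):
        # Forward-Euler step of dv/dt = (-(v - v_rest) + i_in) / tau
        v += dt * (-(v - v_rest) + i_in) / tau
        if v >= v_thresh:
            # Threshold crossing: emit a spike and reset the membrane
            spikes.append(step)
            v = v_reset
    return spikes


# A weak constant input never reaches threshold; a strong one spikes regularly.
weak = simulate_lif([0.5] * 200)
strong = simulate_lif([2.0] * 200)
print(len(weak), len(strong))
```

The neuron produces output only when its membrane potential crosses the threshold, so a weak input generates no events at all. This event-driven sparsity is the property that neuromorphic hardware exploits to save energy.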

We are currently developing a neuromorphic-hardware-friendly reward-modulated spike-timing-dependent plasticity (R-STDP) framework, integrated with the twin delayed deep deterministic policy gradient (TD3) reinforcement learning algorithm, to control a 3-degree-of-freedom robotic arm.
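
As a rough illustration of how reward modulation works, the sketch below implements a generic trace-based R-STDP rule for a single synapse: spike-timing correlations accumulate in an eligibility trace, and the weight changes only when a reward signal arrives. This is a common textbook formulation, not the Center's framework. The class `RSTDPSynapse`, the helper `run_trial`, and every constant are hypothetical, and in a TD3 setting the reward term would presumably be derived from the critic rather than supplied by hand.

```python
import math


class RSTDPSynapse:
    """Single synapse with a generic trace-based R-STDP rule (illustrative)."""

    def __init__(self, w=0.5, tau_trace=20.0, tau_elig=200.0,
                 a_plus=0.01, a_minus=0.012, lr=1.0, w_min=0.0, w_max=1.0):
        self.w = w
        self.tau_trace, self.tau_elig = tau_trace, tau_elig
        self.a_plus, self.a_minus = a_plus, a_minus
        self.lr, self.w_min, self.w_max = lr, w_min, w_max
        self.x_pre = 0.0   # low-pass trace of presynaptic spikes
        self.x_post = 0.0  # low-pass trace of postsynaptic spikes
        self.elig = 0.0    # eligibility trace storing pending STDP changes

    def step(self, pre_spike, post_spike, reward, dt=1.0):
        # Exponential decay of all traces
        decay = math.exp(-dt / self.tau_trace)
        self.x_pre *= decay
        self.x_post *= decay
        self.elig *= math.exp(-dt / self.tau_elig)
        # STDP terms are written to the eligibility trace, not the weight
        if post_spike:
            self.elig += self.a_plus * self.x_pre    # pre before post
        if pre_spike:
            self.elig -= self.a_minus * self.x_post  # post before pre
        # Register the new spikes after they have been paired
        if pre_spike:
            self.x_pre += 1.0
        if post_spike:
            self.x_post += 1.0
        # The reward gates how much of the eligibility reaches the weight
        self.w += self.lr * reward * self.elig * dt
        self.w = min(max(self.w, self.w_min), self.w_max)
        return self.w


def run_trial(reward):
    """Pre spike at t=0, post spike at t=5, reward delivered at t=10."""
    syn = RSTDPSynapse()
    for t in range(20):
        syn.step(pre_spike=(t == 0), post_spike=(t == 5),
                 reward=reward if t == 10 else 0.0)
    return syn.w


print(run_trial(1.0), run_trial(0.0), run_trial(-1.0))
```

With a positive reward the causal pre-then-post pairing strengthens the synapse, with zero reward the weight is left unchanged, and with a negative reward it weakens. Each update uses only quantities local to the synapse plus one scalar reward, which is why rules of this kind are considered a good fit for on-chip learning.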

This approach simplifies complex neuromorphic learning schemes while enabling on-chip online learning capabilities with significantly reduced computational overhead compared to traditional backpropagation methods. Our work aims to bridge the gap between biological neural computation and practical robotic applications, demonstrating that SNN-based systems can achieve robust adaptive control while maintaining the ultra-low power consumption characteristics essential for next-generation autonomous systems and edge computing applications.

Key Features

- Energy Efficiency: Ultra-low power consumption through spike-based processing

- Biologically Inspired: Mimics natural neural computation

- Real-time Control: Suitable for time-critical robotic applications

- On-chip Learning: R-STDP enables online learning on neuromorphic hardware

Keywords

- Spiking Neural Networks (SNNs)

- Reward-modulated STDP (R-STDP)

- Twin Delayed Deep Deterministic Policy Gradient (TD3)

- 3-DOF Robotic Arm Control
